Disclaimer: This content is for informational purposes only and does not constitute legal or compliance advice. Consult a qualified HIPAA compliance attorney for guidance specific to your organization.

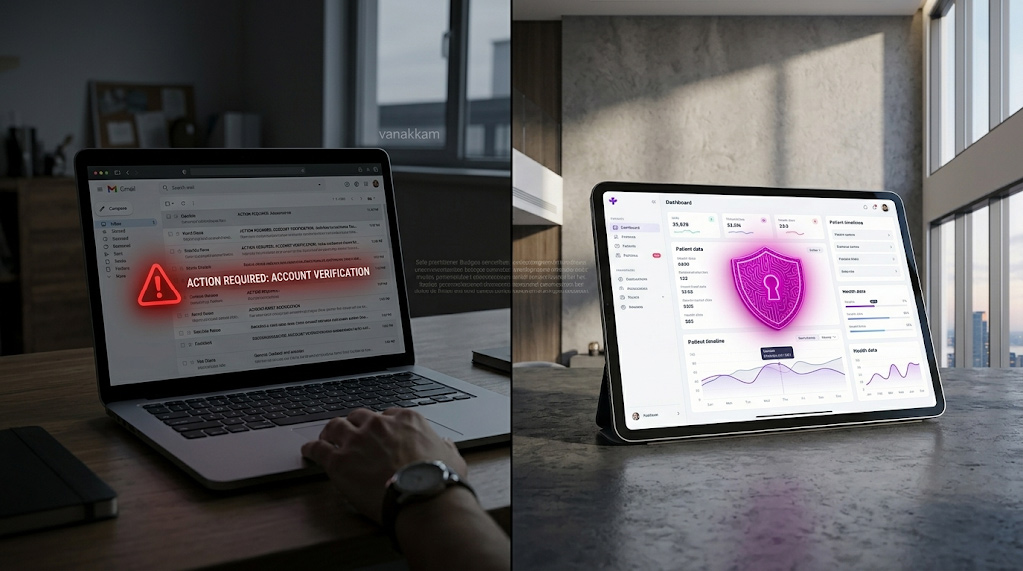

Picture this. A nurse at your clinic is running behind. She has six patient follow-up emails to write before the end of the day. Someone in the office mentioned that ChatGPT can do it in seconds, so she opens it up, types in each patient’s name, diagnosis, and post-surgery medication list, and asks it to draft the emails.

Two minutes later, the emails are done. The nurse feels great about it.

Your clinic just handed protected patient data to a third-party AI company, without a legal agreement, without patient consent, and without any control over what happens to that data next.

That is not a hypothetical. It is happening in clinics across the country every single day. And the federal government is starting to fine organizations for exactly this kind of oversight.

This article explains what is actually going on with AI and patient privacy, why the risks are so easy to miss, and what your team needs to do before your next AI tool goes live. If you are new to the topic, the broader foundations of HIPAA compliance for healthcare organizations are a good place to start.

What a Violation Actually Costs

In 2019, the University of Rochester Medical Center paid $3 million to settle a federal investigation. The cause was not a cyberattack or a rogue employee. It was unencrypted laptops and a flash drive that got lost. Devices carrying patient information with no protection on them.

$3M

University of Rochester Medical Center, 2019

Federal settlement for lost unencrypted devices containing patient data. No cyberattack. No malicious intent. Just missing safeguards.

Now think about AI tools. Every time your staff uses an AI assistant, that tool is handling patient information too. The question is: does anyone at your clinic know where that information goes once the AI is done with it?

Most clinics that adopt AI tools have no idea how those tools store, share, or retain patient data. That gap is exactly where violations happen.

HIPAA (the Health Insurance Portability and Accountability Act, the federal law protecting patient privacy) does not make exceptions for new technology. If a tool touches patient data, the same rules apply. A federal proposal published in early 2025 to update HIPAA's security rules makes the same point: an AI system handling patient data is subject to the same requirements as your medical records system.

Three Ways AI Is Creating Problems Right Now

These are not worst-case scenarios. They are the kinds of mistakes that show up repeatedly across real clinical settings.

Story 1: The Clinic That Thought the Contract Was Enough

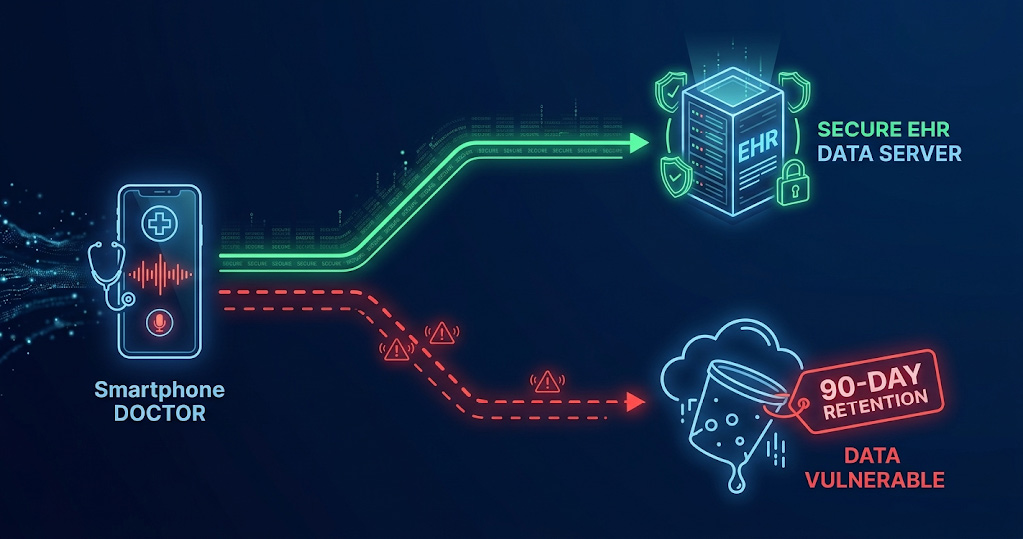

A specialty clinic brings in an AI tool that listens to patient appointments and automatically writes the clinical note. It is a huge time-saver. The IT director checks that the vendor signed the right legal contract, called a Business Associate Agreement (the contract that makes a vendor legally responsible for protecting your patients’ data), and considers the job done.

But nobody looked at the default settings inside the software.

The AI was keeping a full audio recording of every single patient appointment for 90 days. Not just the written note. The actual recording of the conversation, with patients talking about their symptoms, medications, and personal health struggles, sitting on a third-party server for three months after the appointment ended.

HIPAA's "minimum necessary" standard requires clinics to limit the patient data they use and share to what the job actually needs. Once the written note exists, keeping the raw audio recording serves no medical purpose. It is just risk sitting on someone else’s server.

Then it got worse. Medical assistants noticed the AI occasionally got drug names wrong, so they started downloading short audio clips and sharing them through the office messaging app to double-check. Patient recordings, floating around in chat messages, completely outside the secure medical records system.

What this means for you

Signing a contract with an AI vendor is just the starting line. Someone needs to log in to the admin settings, understand what the tool is storing, and turn off everything it does not need to keep.

Story 2: The Shortcut That Opened the Back Door

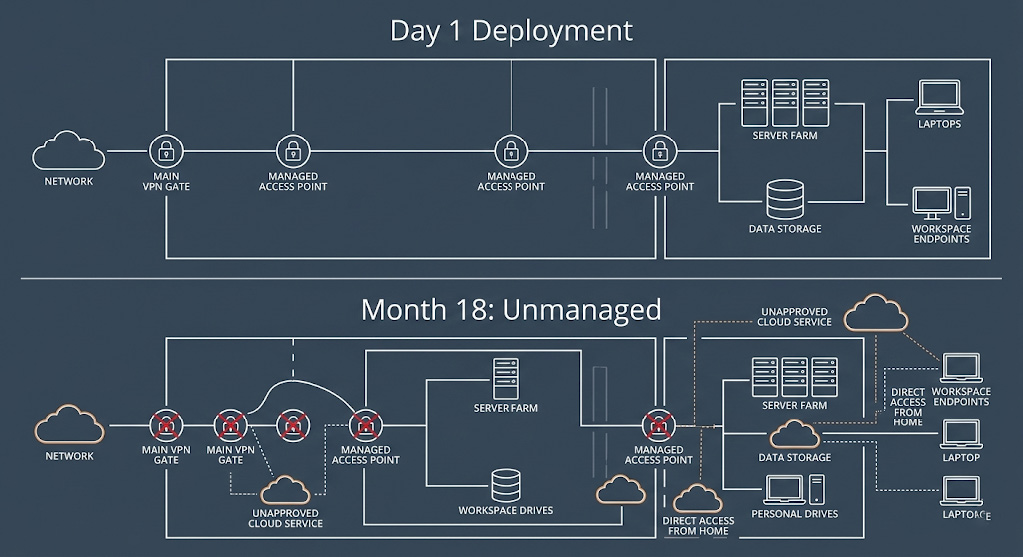

A hospital builds an AI system to predict which patients are at risk of developing sepsis, a serious, fast-moving infection that can turn fatal within hours. The tool works. It saves lives. Everyone is proud of it.

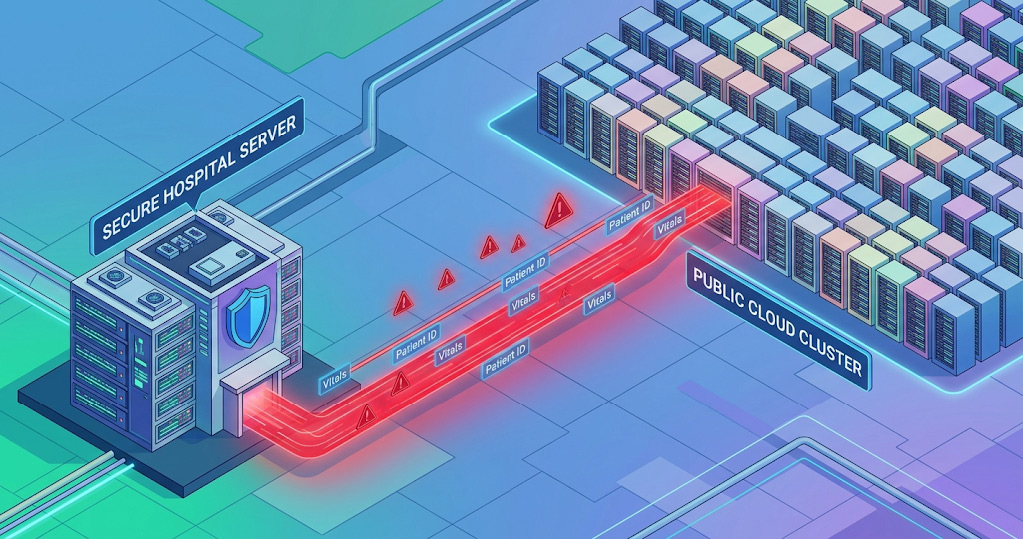

Then the hospital hits a technical problem. Running the AI in real time requires more computing power than their own servers can handle. Someone suggests sending the data out to a large cloud service to do the heavy lifting, then pulling the result back. The data is only in the cloud for a second, after all.

It does not matter. Any time patient data moves from one system to another, even briefly or just to run a calculation, the receiving system needs a legal agreement in place and the data needs to be protected in transit. Neither condition was met.

This is not a hypothetical. When Advanced Care Hospitalists shared patient data with a billing contractor without the required legal contract in place, the organization agreed to pay $500,000 to settle the federal investigation. No sophisticated attack was involved. The missing contract was at the center of the case.

What this means for you

Every system your patient data touches, even for a moment, needs to be covered by a legal agreement and a secure connection. There are no exceptions for tools that only use the data briefly.
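One way to make this rule checkable is to keep a simple inventory of every place patient data is sent and test each entry for the two conditions above. The following is a minimal sketch, not a compliance tool: the `DataFlow` record, its field names, and the example URLs are all hypothetical, and an `https` address is only a rough stand-in for "secure connection".

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class DataFlow:
    """One place patient data is sent. All fields are illustrative."""
    destination: str   # human-readable name of the receiving system
    endpoint: str      # address the data is sent to
    baa_signed: bool   # is a signed Business Associate Agreement on file?


def find_gaps(flows):
    """Return (destination, problem) pairs for flows missing a safeguard."""
    gaps = []
    for flow in flows:
        if not flow.baa_signed:
            gaps.append((flow.destination, "no signed agreement on file"))
        if urlparse(flow.endpoint).scheme != "https":
            gaps.append((flow.destination, "connection is not encrypted"))
    return gaps


# Hypothetical inventory: one covered system, one "just for a second" shortcut.
flows = [
    DataFlow("Records system vendor", "https://ehr.example.com/api", True),
    DataFlow("Cloud compute for sepsis model", "http://compute.example.net/score", False),
]

for destination, problem in find_gaps(flows):
    print(f"{destination}: {problem}")
```

The value of a list like this is less the code than the habit: if a destination is not in the inventory, nobody has checked it.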

Story 3: The Free Tool That Was Never Free

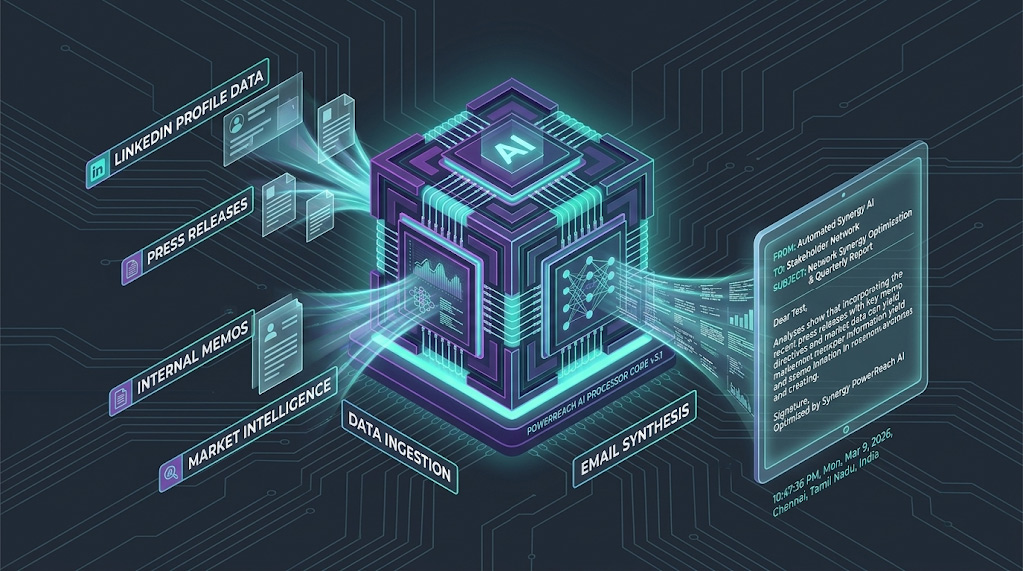

A dental network rolls out a commercial AI chatbot across their front desk computers to help staff draft patient emails and summarize discharge instructions. Quick rollout, no IT department involved, everyone happy.

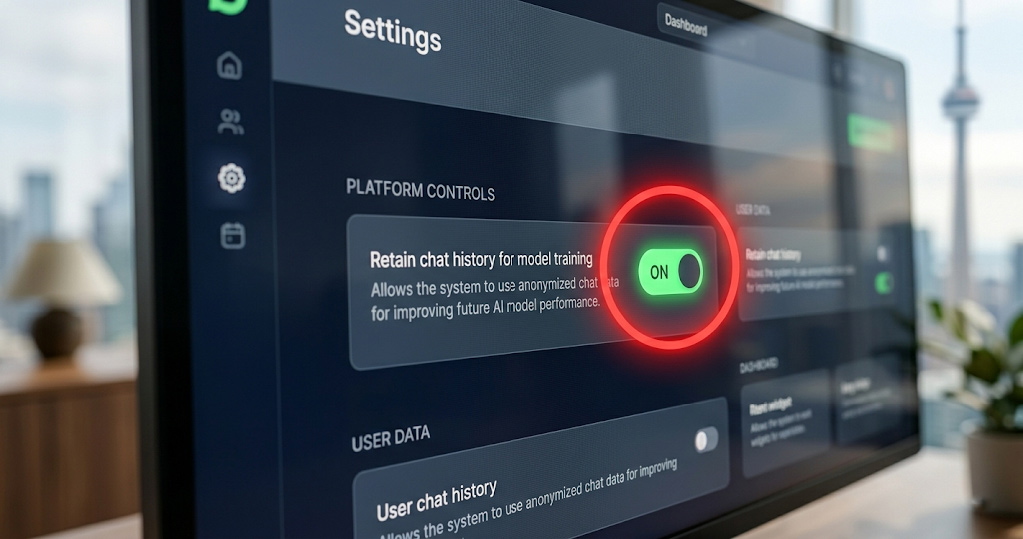

Nobody checked whether they were using the free consumer version or the paid business version.

The consumer version saves every conversation to help the AI learn and improve over time. Every time a staff member typed a patient’s name, their procedure, or their follow-up medications, all of it was being stored on the vendor’s servers, accessible to the company’s own engineers, and used to train the AI.

Lifespan Health System learned a version of this lesson when an unencrypted laptop holding patient data was stolen, and their settlement cost $1.04 million. The principle is the same: once patient data leaves your controlled environment without the right protections, you are responsible for what happens next.

What this means for you

Free or consumer versions of AI tools are almost never built for healthcare. If your staff is using any AI tool that was not specifically approved and set up by your compliance team, you are likely already exposed.

Why This Keeps Happening to Good Clinics

None of the people in these stories were careless. They were trying to do their jobs better. AI tools are genuinely useful, and the companies selling them often make the compliance process sound simpler than it is. Here is why the problems are so easy to miss:

- AI tools look like regular software, but they are not. Unlike a scheduling app, many AI tools are designed to retain what you feed them and learn from it. Default settings often involve storing and sharing that input in ways that are not obvious from the outside.

- A signed contract is just the beginning. Many clinics treat the vendor agreement as a compliance checkbox. It is not. The agreement covers who is legally responsible. Actual protection comes from how the tool is configured and monitored day to day.

- Staff solve problems creatively. When a nurse finds a workaround that saves ten minutes, she uses it. Nobody thinks about data privacy when sharing an audio clip to verify a drug name. Training needs to be specific, practical, and repeated regularly.

- The tools move faster than the training. AI has changed faster than most compliance programs have updated. Most violations in this space come from people trying to be efficient, not from bad intentions.

If you want to go deeper on how managed security services can help healthcare organizations stay ahead of these issues, see our guide on managed security services for healthcare clinics.

What Your Clinic Can Do About It

You do not need to stop using AI. You need to use it correctly. Here is a plain-language version of what that looks like.

Before You Bring Any AI Tool Online

1. Get the right legal agreement in place

Every AI vendor that will touch patient data needs to sign a Business Associate Agreement, a legal contract that holds them responsible for protecting that data under federal law. This includes AI scribing tools, chatbots, scheduling assistants, and analytics software. If a vendor refuses to sign one, you cannot legally use their product with patient data.

2. Ask where your data actually goes

Before signing anything, get answers in writing to three questions: Where is our data stored? How long do you keep it? Who can access it? If the vendor is vague, treat that as a warning sign.

3. Use the business version, not the free one

Consumer versions of AI tools are almost never built for healthcare. Always use the enterprise or healthcare-specific version of any tool, and confirm that conversation history and AI training features are turned off before anyone on your team starts using it.

After the Tool Goes Live

4. Train your staff on what not to type

Your team needs to be told, clearly and more than once, that patient names, diagnoses, medications, and any other identifying information should never be typed into an AI tool that has not been approved and configured for healthcare compliance. Make this part of onboarding and annual refresher training.
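Training can be backed up with a simple automated reminder. The sketch below, offered as an illustration only, scans a draft for a few obviously formatted identifiers before it is pasted into an AI tool. The patterns and labels are assumptions made for this example. They will miss names, diagnoses, and most free-text details, so a clean result never means the text is safe to share.

```python
import re

# Illustrative patterns only. They catch a few formatted identifiers and
# cannot recognize names, diagnoses, or medications.
PATTERNS = {
    "SSN-like number": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone number": re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b"),
    "date (possible birth date)": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "record number": re.compile(r"\bMRN[:# ]*\d+\b", re.IGNORECASE),
}


def flag_identifiers(text):
    """Return the labels of every pattern that appears in the text."""
    return [label for label, pattern in PATTERNS.items() if pattern.search(text)]


draft = "Follow up with MRN 448812, DOB 04/12/1961, call 555-201-3344."
found = flag_identifiers(draft)
if found:
    print("Stop: this draft appears to contain " + ", ".join(found))
```

A check like this works best as a nudge at the moment of temptation, not as a replacement for the training itself.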

5. Keep a record of who uses what

Every AI interaction involving patient data should be traceable. This is not about monitoring employees. It is about being able to demonstrate to investigators that you know what happened to patient data at every step. Most enterprise AI tools have this built in. Make sure it is turned on.
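For teams curious what a traceable record might contain, here is a rough sketch. The field names and the `audit_record` and `append_record` helpers are hypothetical, and a real enterprise tool will have its own log format. The design choice worth noting is that the entry stores a fingerprint (hash) of the text instead of the text itself, so the log does not become another copy of patient details. A hash of very short text is not strong protection on its own, so the log still needs access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user_id, tool, purpose, prompt_text, now=None):
    """Build one log entry describing an AI interaction (illustrative fields)."""
    now = now or datetime.now(timezone.utc)
    return {
        "timestamp": now.isoformat(),
        "user_id": user_id,
        "tool": tool,
        "purpose": purpose,
        # Fingerprint of the text, so the log holds no patient details itself.
        "prompt_sha256": hashlib.sha256(prompt_text.encode("utf-8")).hexdigest(),
    }


def append_record(path, record):
    """Append the entry as one JSON line to the log file at `path`."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")


record = audit_record(
    user_id="nurse-017",
    tool="approved-scribe-tool",
    purpose="post-visit summary",
    prompt_text="Jane Doe amoxicillin follow-up",
)
print(json.dumps(record, indent=2))
```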

6. Check your settings when the tool updates

AI vendors update their products regularly, and default settings can quietly change. A setting you turned off six months ago may have been switched back on. Someone on your team needs to own this and review it regularly.
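A review like this is easier when the approved settings are written down once and compared against whatever the tool currently reports. The sketch below assumes the settings can be copied or exported into a simple list of names and values. The setting names are invented for illustration and will differ for every vendor.

```python
# Settings the compliance team signed off on (hypothetical names and values).
APPROVED = {
    "retain_audio_days": 0,
    "use_data_for_training": False,
    "save_conversation_history": False,
}


def find_drift(approved, current):
    """Return {setting: (approved_value, current_value)} for every mismatch."""
    drift = {}
    for name, expected in approved.items():
        actual = current.get(name, "<missing>")
        if actual != expected:
            drift[name] = (expected, actual)
    return drift


# What the admin console shows after a vendor update (hypothetical).
current = {
    "retain_audio_days": 90,
    "use_data_for_training": False,
}

for name, (expected, actual) in find_drift(APPROVED, current).items():
    print(f"{name}: approved {expected!r}, now {actual!r}")
```

A setting that disappears after an update is reported as missing, because a renamed or removed option is as worth investigating as a changed one.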

7. Know what to do when something goes wrong

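If patient data is exposed through an AI tool, federal law requires you to notify affected patients without unreasonable delay, and no later than 60 days after you discover the breach. The federal government must be notified as well, and larger breaches fall under the same 60-day limit. Make sure your team knows this process before they need it, not after.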
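The 60-day window is simple date arithmetic, counted from the day the exposure was discovered. The sketch below shows the calculation with made-up dates. It treats 60 days as the outer limit only: notification is expected without unreasonable delay, and state laws may set shorter deadlines, so this is not a substitute for legal advice.

```python
from datetime import date, timedelta

NOTIFICATION_WINDOW_DAYS = 60  # outer limit, counted from discovery


def notification_deadline(discovered_on):
    """Latest date by which affected patients must be notified."""
    return discovered_on + timedelta(days=NOTIFICATION_WINDOW_DAYS)


def days_remaining(discovered_on, today):
    """Days left before the deadline (negative if it has passed)."""
    return (notification_deadline(discovered_on) - today).days


discovered = date(2025, 3, 3)  # hypothetical discovery date
print(notification_deadline(discovered))               # 2025-05-02
print(days_remaining(discovered, date(2025, 4, 1)))    # 31
```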

Quick Reference: AI Tools and What to Watch For

Use this as a starting point when reviewing the tools your clinic already uses.

| AI Tool Type | The Risk in Plain English | What to Do |
| --- | --- | --- |
| Scribing: AI Voice Assistants | May keep audio recordings long after the written note is done. | Ask how long audio is stored. Set it to delete immediately after the note is created. |
| Chatbots: AI Writing Assistants | Free versions save conversations to train the AI, including patient details staff type in. | Use the enterprise version only. Confirm conversation history and data training are off. |
| Analytics: Predictive Tools | Patient data may be sent to outside servers to run calculations without proper agreements. | Confirm every external system has a signed legal agreement and secure data transfer. |
| Triage: Patient Intake Bots | The vendor may have access to symptoms and diagnoses patients shared through the bot. | Check who at the vendor can access your data, and limit it to what is strictly necessary. |

Frequently Asked Questions

Does HIPAA actually apply to AI tools?

Yes, fully. In early 2025, the federal government proposed the first major update to its healthcare data security rules in over 20 years, and AI tools are explicitly included. Any software that handles patient data, regardless of how new or sophisticated it is, falls under the same rules as your medical records system.

What if my staff is already using an unapproved AI tool?

Stop using it for patient data right away and speak with a compliance advisor about whether any information has already been shared externally. Finding the issue yourself is far better than having it discovered during an investigation.

How do we know if our vendor agreement is strong enough?

A solid agreement should specify exactly which AI products are covered, where data is stored, how long it is kept, who can access it, and what happens if there is a breach. If your current contract does not mention AI specifically, it likely needs updating.

Is this really that common?

Far more common than most clinics realize. The problem is not reckless decisions. It is that AI tools have moved faster than compliance training has. Most violations in this space come from staff trying to be efficient, not from bad intentions.

Ready to Review Your AI Tools?

Most clinics that come to us for a review discover at least one tool handling patient data in a way that was never intended. Most issues are fixable. The hard part is finding them before an investigator does.
